Beyond the Benchmark: A 2026 Guide to Building Context-Aware, Secure AI Agents

By Abo-Elmakarem Shohoud | Ailigent

As we navigate the middle of 2026, the landscape of Artificial Intelligence has shifted from a race for raw parameters to a sophisticated discipline of architectural context. The days of asking "Which is the best LLM?" are officially behind us. In today's enterprise environment, the answer is always: "It depends on your context."

There Is No “Best” LLM in 2026 — Only Context-Driven Choices

Source: Dev.to AI

At Ailigent, we have observed that the most successful implementations this year aren't the ones using the largest models, but those using the most appropriate ones. Whether you are a business owner looking to automate customer operations or a tech professional building the next generation of SaaS, understanding how to orchestrate these models through tool calling and secure frameworks is the new gold standard.

Learning Objectives

By the end of this tutorial, you will be able to:

- Evaluate LLMs based on context-driven metrics rather than generic benchmarks.

- Implement a multi-step tool-calling workflow for AI agents.

- Apply 2026-standard security protocols to prevent adversarial attacks.

- Design an AI architecture that balances cost, latency, and performance.

Section 1: The Death of the Universal "Best" Model

In 2026, Large Language Models (LLMs) have become specialized commodities. A model that excels at creative writing might fail miserably at generating deterministic JSON for financial transactions.

Context-Driven Selection is a methodology where model choice is dictated by specific operational constraints—such as data residency laws, inference budget, and millisecond-level latency requirements—rather than leaderboard rankings.

For instance, if you are building an on-device assistant for a medical professional, a 7B-parameter Small Language Model (SLM) running locally can be a better fit than a 2-trillion-parameter cloud model. Why? Because patient data never leaves the device and responses do not depend on a network round trip, and in healthcare, data privacy and low latency are often non-negotiable.
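
A minimal sketch of context-driven selection, assuming each candidate model is described by a few measured properties. The model names, latency figures, and costs below are hypothetical placeholders, not benchmarks; the point is only that hard constraints filter the candidates before any ranking happens.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str                     # hypothetical label, not a real product
    runs_on_device: bool          # True if inference stays on local hardware
    p95_latency_ms: int           # assumed latency measured on your own workload
    cost_per_1k_requests: float   # assumed cost in USD


@dataclass(frozen=True)
class Constraints:
    require_on_device: bool
    max_latency_ms: int
    max_cost_per_1k_requests: float


def select_model(candidates, constraints):
    """Return the cheapest candidate that meets every hard constraint, or None."""
    eligible = [
        m for m in candidates
        if (m.runs_on_device or not constraints.require_on_device)
        and m.p95_latency_ms <= constraints.max_latency_ms
        and m.cost_per_1k_requests <= constraints.max_cost_per_1k_requests
    ]
    return min(eligible, key=lambda m: m.cost_per_1k_requests, default=None)


# Illustrative numbers only.
CANDIDATES = [
    ModelProfile("frontier-cloud", False, 1800, 12.0),
    ModelProfile("local-slm-7b", True, 120, 0.4),
]

clinic = Constraints(require_on_device=True, max_latency_ms=300,
                     max_cost_per_1k_requests=5.0)
choice = select_model(CANDIDATES, clinic)
print(choice.name if choice else "no model fits")
```

Leaderboard scores do not appear anywhere in the filter: a model that violates a residency or latency constraint is excluded no matter how well it ranks.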

Comparison: LLM Archetypes in 2026

| Model Category | Best Use Case | Key Advantage | Trade-off |
|---|---|---|---|
| Frontier Cloud Models | Complex Reasoning / Strategy | Highest IQ & Logic | High Cost / Latency |
| Specialized SLMs | Edge Computing / Mobile | Speed & Privacy | Limited General Knowledge |
| Coding/Logic Models | Tool Calling / API Orchestration | Structural Accuracy | Less "Creative" Tone |
| Governance-Locked Models | Finance / Legal / Government | Regulatory Compliance | Slower Update Cycles |

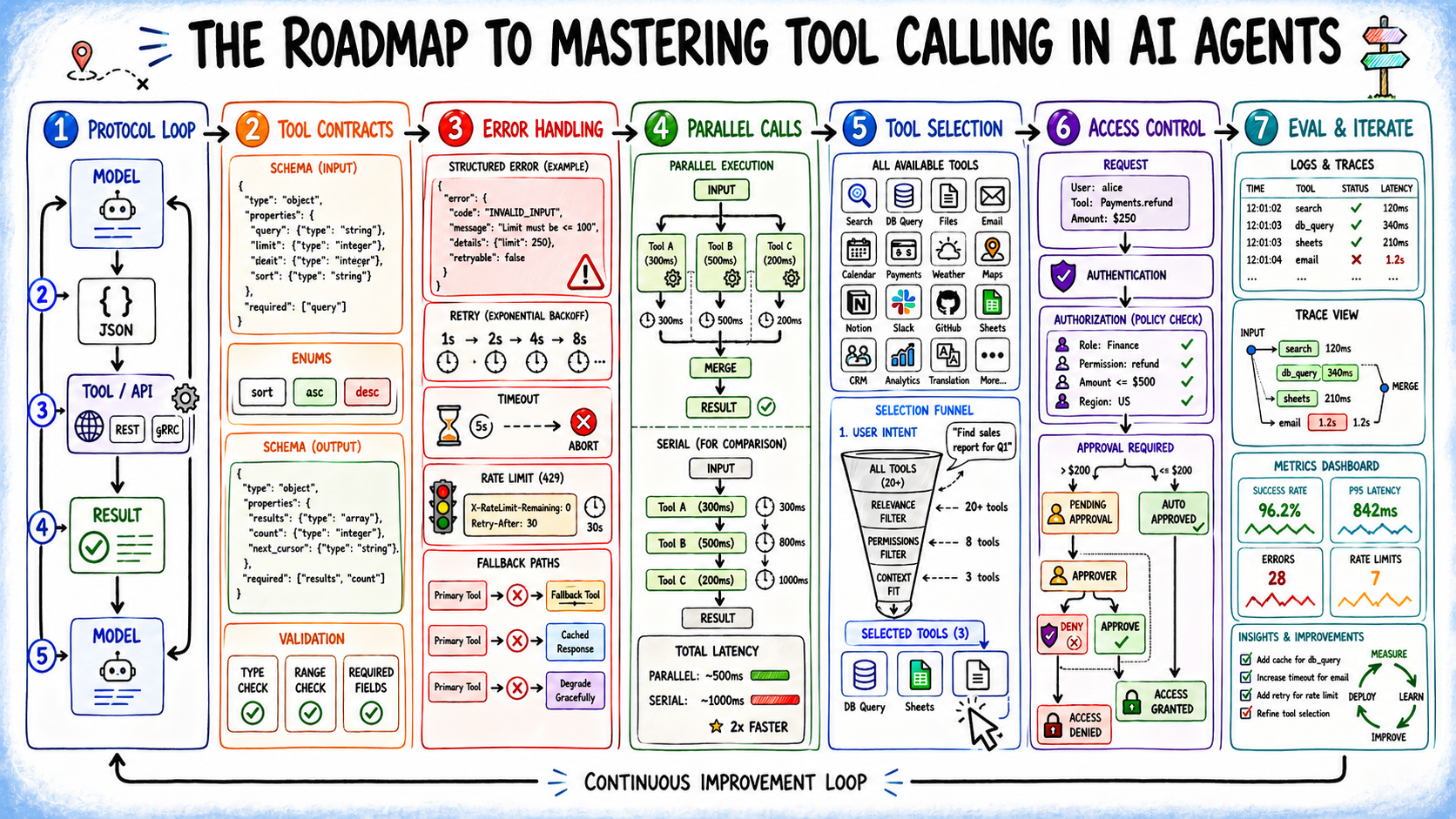

Section 2: Mastering Tool Calling in AI Agents

To move from a chatbot to a functional agent, your AI must interact with the world. This is achieved through tool calling.

Tool Calling is a capability where an LLM identifies which external function to run and generates the necessary arguments in a structured format (like JSON) to execute that task.

Agentic AI is a paradigm where AI models act as autonomous controllers that plan, use tools, and reason to achieve complex goals without step-by-step human prompting.

The Roadmap to Mastering Tool Calling in AI Agents

Source: Machine Learning Mastery

Step-by-Step: Implementing a Tool-Calling Agent

- Define the Toolset: Create a JSON schema that describes your functions. In 2026, schemas must be hyper-specific to avoid "hallucinated parameters."

- The Reasoning Loop: Use a ReAct (Reason + Act) pattern. The agent thinks about the goal, decides on a tool, and then observes the output.

- Output Parsing: Ensure your backend can handle the model's JSON output and execute the code safely in a sandboxed environment.
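
The three steps above can be sketched as a small loop. This is an illustration under stated assumptions, not a production agent: `scripted_model` stands in for a real LLM call, the `thought` / `action` / `arguments` / `final_answer` reply format is a convention invented for this example, and the tool returns made-up stock data.

```python
import json


def get_inventory_stock(sku, location_id="MAIN"):
    """Stand-in tool: a real version would query a warehouse system."""
    fake_stock = {("SKU-1", "MAIN"): 42}
    return {"sku": sku, "location_id": location_id,
            "units": fake_stock.get((sku, location_id), 0)}


# Registry of callable tools; only names listed here may be executed.
TOOLS = {"get_inventory_stock": get_inventory_stock}


def scripted_model(history):
    """Stand-in for an LLM call: requests one tool, then answers."""
    observations = [m for m in history if m["role"] == "tool"]
    if not observations:
        return json.dumps({"thought": "I need the stock level.",
                           "action": "get_inventory_stock",
                           "arguments": {"sku": "SKU-1"}})
    units = json.loads(observations[-1]["content"])["units"]
    return json.dumps({"thought": "I have what I need.",
                       "final_answer": f"SKU-1 has {units} units in stock."})


def run_agent(goal, model=scripted_model, max_steps=5):
    history = [{"role": "user", "content": goal}]
    for _ in range(max_steps):
        step = json.loads(model(history))            # Reason: parse the structured reply
        if "final_answer" in step:
            return step["final_answer"]
        tool = TOOLS.get(step.get("action"))
        if tool is None:                             # never run an unregistered tool
            observation = {"error": f"unknown tool {step.get('action')!r}"}
        else:
            observation = tool(**step.get("arguments", {}))   # Act
        history.append({"role": "assistant", "content": json.dumps(step)})
        history.append({"role": "tool", "content": json.dumps(observation)})  # Observe
    return "Stopped: step limit reached."


print(run_agent("How many units of SKU-1 do we have?"))
```

The step limit matters as much as the loop itself: without it, a model that keeps requesting tools would never return control to your application.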

Example: A Simplified Tool Call Schema (Python/JSON)

    {
      "name": "get_inventory_stock",
      "description": "Retrieves real-time stock levels for a specific SKU in the warehouse.",
      "parameters": {
        "type": "object",
        "properties": {
          "sku": {"type": "string", "description": "The unique identifier for the product"},
          "location_id": {"type": "string", "description": "The warehouse code"}
        },
        "required": ["sku"]
      }
    }
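
One way to guard against "hallucinated parameters" is to check the model's arguments against the schema before running anything. The sketch below is a deliberately small, hand-written check that covers only what this schema uses (required fields, unknown fields, string types); `validate_arguments` is a hypothetical helper, and a full JSON Schema validator would be the more complete choice in practice.

```python
SCHEMA = {
    "name": "get_inventory_stock",
    "description": "Retrieves real-time stock levels for a specific SKU in the warehouse.",
    "parameters": {
        "type": "object",
        "properties": {
            "sku": {"type": "string", "description": "The unique identifier for the product"},
            "location_id": {"type": "string", "description": "The warehouse code"},
        },
        "required": ["sku"],
    },
}

# Only the JSON type used by this schema is mapped; extend as needed.
_PYTHON_TYPES = {"string": str}


def validate_arguments(schema, arguments):
    """Return a list of problems; an empty list means the call may proceed."""
    params = schema["parameters"]
    properties = params["properties"]
    problems = []
    for name in params.get("required", []):
        if name not in arguments:
            problems.append(f"missing required parameter: {name}")
    for name, value in arguments.items():
        if name not in properties:
            problems.append(f"unexpected parameter: {name}")
            continue
        expected = _PYTHON_TYPES.get(properties[name]["type"])
        if expected is not None and not isinstance(value, expected):
            problems.append(f"wrong type for parameter: {name}")
    return problems


print(validate_arguments(SCHEMA, {"sku": "A1", "colour": "red"}))
```

Returning a list of problems, rather than raising on the first one, lets you feed every issue back to the model in a single correction turn.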

Exercise 1: Look at the schema above. How would you modify it to include a 'priority_level' parameter for rush orders? Thinking through these constraints is how Abo-Elmakarem Shohoud recommends starting any automation project.

Section 3: The 2026 AI Security Mandate

As AI agents gain the ability to call tools—like sending emails or moving funds—they become targets for high-stakes cyberattacks. Security is no longer an afterthought; it is the foundation.

Prompt Injection is a vulnerability where an attacker provides a malicious input that tricks the LLM into ignoring its original instructions and executing unauthorized commands.

To secure your agents, follow these three pillars:

- Human-in-the-Loop (HITL): For any action that involves high-value assets (over $500 or sensitive PII), require a human click to proceed.

- Adversarial Training: Use a 'Red Team' model to try and break your agent before it goes live.

- Input and Output Sanitization: Treat every LLM output as untrusted input to the next system. Never pipe LLM output directly into a database or shell without a validation layer.
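
Two of these pillars translate directly into code. The sketch below assumes an agent action is a plain dictionary with hypothetical `amount_usd` and `touches_pii` fields, and uses an in-memory SQLite table as a stand-in for a real order database. The $500 threshold comes from the text above; everything else is illustrative.

```python
import sqlite3

APPROVAL_THRESHOLD_USD = 500  # threshold taken from the HITL rule above


def requires_human_approval(action):
    """True when the action exceeds the threshold or touches sensitive PII."""
    return (action.get("amount_usd", 0) > APPROVAL_THRESHOLD_USD
            or action.get("touches_pii", False))


def lookup_order(conn, order_id):
    """Validate the model-supplied value, then use a parameterized query."""
    if not isinstance(order_id, str) or not order_id.isalnum() or len(order_id) > 32:
        raise ValueError("order_id failed validation")
    # The "?" placeholder keeps the value out of the SQL text entirely.
    row = conn.execute("SELECT status FROM orders WHERE id = ?", (order_id,)).fetchone()
    return row[0] if row else None


# Stand-in database for the example.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id TEXT PRIMARY KEY, status TEXT)")
conn.execute("INSERT INTO orders VALUES ('A100', 'shipped')")

print(requires_human_approval({"type": "refund", "amount_usd": 750}))
print(lookup_order(conn, "A100"))
```

The validation check and the parameterized query are intentionally redundant: if one layer is misconfigured, the other still prevents model output from being interpreted as SQL.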

Professional Certification

If you are a developer in 2026, generic coding skills aren't enough. Pursuing an AI Security Certification (such as the CAISP - Certified AI Security Professional) is essential. These programs teach you how to defend against data poisoning and model manipulation, which are the primary threats we face this year.

Section 4: Architecture Walkthrough

Imagine you are building a "Customer Success Agent" for a global retail brand. Here is how the 2026 architecture looks:

- The Router: A small, fast model (like a 3B model) analyzes the incoming query. Is it simple? Send it to a cache. Is it complex? Send it to the Orchestrator.

- The Orchestrator: A reasoning-heavy model (like a Frontier model) determines which tools are needed (e.g., `check_shipping`, `issue_refund`).

- The Guardrail Layer: A specialized security model checks the Orchestrator's plan against company policy.

- Execution: The tools run, the results are formatted, and a response is sent.
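
The four stages can be wired together as below. This is a sketch of the control flow only: each stage that would be a model in the real system is replaced by a simple stand-in function, and the refund limit, order ID, and tool behavior are hypothetical values chosen for the example.

```python
MAX_AUTO_REFUND_USD = 100  # hypothetical company policy limit


def route(query, cache):
    """Router stand-in: a real system would use a small, fast model here."""
    return "cache" if query in cache else "orchestrator"


def plan(query):
    """Orchestrator stand-in: keyword matching replaces model reasoning."""
    text = query.lower()
    steps = []
    if "shipping" in text or "where" in text:
        steps.append({"tool": "check_shipping", "args": {"order_id": "A100"}})
    if "refund" in text:
        steps.append({"tool": "issue_refund",
                      "args": {"order_id": "A100", "amount_usd": 40}})
    return steps


def guardrail(steps):
    """Guardrail stand-in: keep only the steps that policy allows."""
    allowed = []
    for step in steps:
        if (step["tool"] == "issue_refund"
                and step["args"]["amount_usd"] > MAX_AUTO_REFUND_USD):
            continue  # drop refunds above the automatic limit
        allowed.append(step)
    return allowed


# Tool stubs; real versions would call shipping and payment APIs.
TOOLS = {
    "check_shipping": lambda order_id: f"Order {order_id} is in transit.",
    "issue_refund": lambda order_id, amount_usd: f"Refunded ${amount_usd} for order {order_id}.",
}


def handle(query, cache):
    if route(query, cache) == "cache":
        return cache[query]
    approved = guardrail(plan(query))
    results = [TOOLS[step["tool"]](**step["args"]) for step in approved]
    return " ".join(results) or "Escalating to a human agent."


print(handle("I want a refund", cache={}))
```

Note that the guardrail sits between planning and execution, so a plan that violates policy is filtered before any tool runs rather than rolled back afterwards.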

By using this multi-model approach, you optimize for both cost and safety—a strategy we champion at Ailigent to ensure business continuity.

Next Steps for Further Learning

- Deep Dive into RAG: Research "GraphRAG" to see how knowledge graphs are being combined with, and in some cases replacing, simple vector databases.

- Explore Local Inference: Download an open-source model and run it on your local hardware to understand the performance-to-privacy trade-offs.

- Stay Updated: Follow revisions to the OWASP Top 10 for LLM Applications, and apply security patches for the frameworks your agents depend on.

Key Takeaways / Bottom Line

- Context Over Performance: Stop looking for the "best" model. Define your latency, cost, and security constraints first, then select the model that fits that specific box.

- Tool Calling is the Engine: The value of AI in 2026 lies in its ability to do work via APIs, not just talk about it. Master structured JSON outputs to build effective agents.

- Security is Non-Negotiable: With the rise of autonomous agents, prompt injection and data poisoning are real business risks. Implement human-in-the-loop and sanitization layers immediately.

- Hybrid Architectures Win: Use small models for routing and large models for reasoning to create a cost-effective, high-speed AI system.
