Beyond the Chatbot: Engineering Reliability in the 2026 AI Economy

It is January 12, 2026, and the honeymoon phase with generative AI is over. For business owners and tech leaders, the conversation has shifted. We are no longer asking, "What can AI say?" Instead, the critical questions are: "What can AI do reliably?" and "How do we ensure it doesn't fail when the stakes are high?"

Illustration

Source: Dev.to AI

This week, two major stories—one from a pioneering developer and another from tech giant Google—provide a perfect snapshot of the current landscape. They highlight the incredible potential of autonomous agents and the sobering reality of AI limitations in 2026.

The Rise of the Specialist Agent: Healthcare as a Blueprint

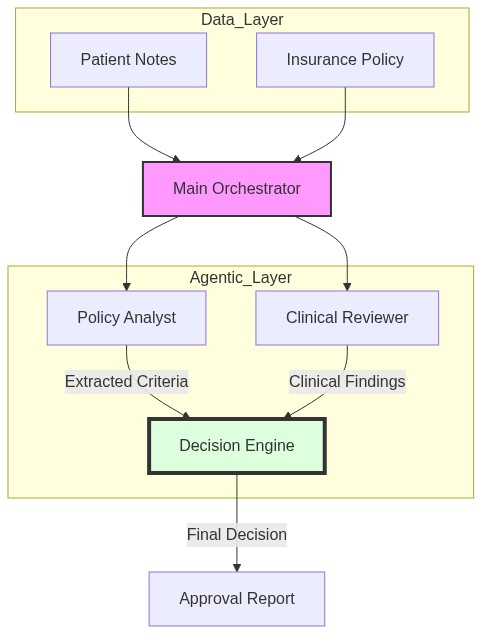

One of the most promising developments this year is the move toward Multi-Agent Orchestration. A recent experiment in the medical field demonstrates this perfectly: the creation of an Autonomous Medical Pre-Authorization Agent.

In the past, medical pre-authorization—the process of getting insurance approval before a procedure—was a manual, soul-crushing bottleneck for both doctors and patients. By 2026, we are seeing this replaced by a collaborative "team" of AI agents, each with a specific role:

- The Policy Analyst: Parses complex, often contradictory insurance documents.

- The Clinical Reviewer: Analyzes patient clinical notes and history.

- The Decision Engine: Synthesizes the data to provide an approval or denial, backed by an explainable rationale.

Business Insight: This isn't just about healthcare. Whether you are in legal, finance, or logistics, the 2026 strategy is to decompose complex workflows into specialized agents. This "divide and conquer" approach allows for greater accuracy and easier debugging than relying on a single, monolithic LLM.
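To make the three roles above concrete, here is a minimal sketch of the decomposition in Python. It is an illustration only, not the experiment's actual implementation: the function names, the `REQUIRES:` policy format, and the keyword matching are all assumptions standing in for real LLM-backed agents. The point is the shape: each stage has one job and a narrow input and output, so a wrong decision can be traced to the stage that produced it.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    approved: bool
    rationale: str  # human-readable explanation of the outcome


def policy_analyst(policy_text: str) -> set[str]:
    """Stand-in for the Policy Analyst: extract required criteria.

    Assumes a hypothetical format with one 'REQUIRES: <criterion>' line
    per criterion. A real agent would parse free-form policy documents.
    """
    return {
        line.split(":", 1)[1].strip().lower()
        for line in policy_text.splitlines()
        if line.strip().upper().startswith("REQUIRES:")
    }


def clinical_reviewer(clinical_notes: str, criteria: set[str]) -> set[str]:
    """Stand-in for the Clinical Reviewer: which criteria do the notes mention?"""
    notes = clinical_notes.lower()
    return {criterion for criterion in criteria if criterion in notes}


def decision_engine(criteria: set[str], met: set[str]) -> Decision:
    """Stand-in for the Decision Engine: combine both results with a rationale."""
    if not criteria:
        return Decision(False, "No criteria could be extracted from the policy")
    missing = sorted(criteria - met)
    if missing:
        return Decision(False, "Missing evidence for: " + ", ".join(missing))
    return Decision(True, "All criteria documented: " + ", ".join(sorted(met)))


def run_pre_authorization(policy_text: str, clinical_notes: str) -> Decision:
    """Run the three stages in order; each stage can be tested on its own."""
    criteria = policy_analyst(policy_text)
    met = clinical_reviewer(clinical_notes, criteria)
    return decision_engine(criteria, met)


if __name__ == "__main__":
    policy = "REQUIRES: failed physical therapy\nREQUIRES: MRI confirmation"
    notes = "Patient failed physical therapy over six weeks."
    print(run_pre_authorization(policy, notes))
```

Because the stages are separate functions, swapping the keyword match for a model call changes one stage without touching the other two.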

The Reality Check: Google’s Medical AI Retreat

While developers are building specialized agents, Google recently faced a significant setback that serves as a warning for all AI-first companies. The tech giant had to pull several AI overviews for medical searches after investigations revealed they were providing dangerously misleading information.

Illustration

Source: The Verge AI

This highlights the "Accuracy Gap" that still exists in 2026. For low-stakes tasks, like summarizing a recipe, AI is usually reliable enough that an occasional mistake costs little. But in high-stakes environments, where a wrong answer can lead to physical harm or financial ruin, generative AI remains a risk if not properly governed.

For business leaders, this reinforces a vital 2026 truth: Information retrieval is not intelligence. Just because a model can find and summarize information doesn't mean it understands the nuance of safety. If your business operates in a regulated industry, your AI strategy must prioritize fact-checking layers and human-in-the-loop protocols.

The Evolution of the Workspace: From Inbox to Action-box

On the productivity front, we are seeing a massive shift in how we interact with our core tools. Google’s new "AI Inbox" for Gmail is a glimpse into the future of work in 2026. Instead of a chronological list of emails, users are presented with a dynamic list of to-dos, summarized topics, and urgent action items generated automatically.

We are moving away from "managing software" toward "managing outcomes." In 2026, an effective manager shouldn't spend four hours a day in an inbox. They should be reviewing the summaries and decisions prepared by their AI workspace.

Actionable Takeaways for 2026

How should you navigate these trends in your organization today?

1. Shift from Chatbots to Workflows

Stop trying to build a better chatbot. Start building a multi-agent workflow. Identify a process in your company that requires three different skill sets (e.g., sales outreach, lead qualification, and CRM logging) and build an agent for each step.

2. Implement the "Explainability Standard"

Follow the lead of the medical pre-auth experiment. Every AI-generated decision in your business must come with a "Rationale Log." If the AI cannot explain why it reached a conclusion based on specific source data, that conclusion cannot be trusted.
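One way to enforce this standard is to make the log itself refuse any decision that arrives without a rationale or without cited sources. The sketch below is a hypothetical design, not a description of the medical pre-auth experiment: `RationaleLog`, `RationaleEntry`, and their fields are names invented for illustration.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class RationaleEntry:
    decision: str
    rationale: str
    sources: list[str]  # identifiers or excerpts the decision relied on
    timestamp: str      # UTC, ISO 8601


class RationaleLog:
    """Append-only log that rejects unexplained decisions."""

    def __init__(self) -> None:
        self._entries: list[RationaleEntry] = []

    def record(self, decision: str, rationale: str, sources: list[str]) -> RationaleEntry:
        # The rule from the text: no explanation tied to source data, no trust.
        if not rationale.strip() or not sources:
            raise ValueError("decision rejected: missing rationale or sources")
        entry = RationaleEntry(
            decision=decision,
            rationale=rationale,
            sources=list(sources),
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self._entries.append(entry)
        return entry

    def to_json(self) -> str:
        """Serialize the log for auditors or downstream review tools."""
        return json.dumps([asdict(e) for e in self._entries], indent=2)


if __name__ == "__main__":
    log = RationaleLog()
    log.record(
        decision="deny",
        rationale="Policy requires imaging; none found in the clinical notes.",
        sources=["policy section 4.2", "clinical note 2026-01-08"],
    )
    print(log.to_json())
```

Rejecting at write time means an unexplained decision never reaches the people who act on it, rather than being discovered later in an audit.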

3. Define Your "High-Stakes" Zones

Audit your AI usage. Where is a 2% error rate acceptable (marketing copy, internal brainstorming)? Where would even a 0.1% error rate be catastrophic (medical advice, legal contracts, financial reporting)? In high-stakes zones, AI should assist, but humans must authorize.
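The audit can be captured as a small routing table. The sketch below assumes a hypothetical mapping from task type to stakes level, using the examples from this section; the task names and the `route` function are illustrative. It also shows why the 2% and 0.1% figures matter at volume: the expected number of bad outputs is simply volume times error rate.

```python
from enum import Enum


class Stakes(Enum):
    LOW = "low"
    HIGH = "high"


# Hypothetical classification; each organization would fill in its own audit.
TASK_STAKES = {
    "marketing_copy": Stakes.LOW,
    "internal_brainstorming": Stakes.LOW,
    "medical_advice": Stakes.HIGH,
    "legal_contract": Stakes.HIGH,
    "financial_reporting": Stakes.HIGH,
}


def route(task_type: str) -> str:
    """Decide whether AI output ships directly or waits for a human."""
    # Unclassified tasks default to HIGH so that gaps in the audit fail safe.
    stakes = TASK_STAKES.get(task_type, Stakes.HIGH)
    return "auto_release" if stakes is Stakes.LOW else "human_authorization"


def expected_errors(volume: int, error_rate: float) -> float:
    """Expected number of erroneous outputs for a given volume."""
    return volume * error_rate


if __name__ == "__main__":
    for task in ("marketing_copy", "legal_contract", "unlisted_task"):
        print(task, "->", route(task))
    # At 10,000 outputs: roughly 200 errors at 2%, roughly 10 at 0.1%.
    print(expected_errors(10_000, 0.02), expected_errors(10_000, 0.001))
```

Even at a 0.1% error rate, about ten flawed outputs per ten thousand would reach customers in a high-stakes zone without a human gate, which is the case for requiring authorization there.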

4. Optimize for "Output Management"

With tools like the AI Inbox becoming standard, the bottleneck is no longer doing the work, but verifying the work. Train your team not on how to write emails, but on how to audit AI-generated tasks for strategic alignment.

Conclusion

As we move further into 2026, the winners won't be those who have the fastest AI, but those who have the most reliable systems. The experiment with medical pre-authorization shows us the path forward: specialized, modular agents working in tandem. Meanwhile, Google’s setbacks remind us that without rigorous guardrails, even the most advanced AI can become a liability.

At the portfolio of Abo-Elmakarem Shohoud, we believe that automation is not about replacing human judgment—it's about scaling it. Build your agents, verify their logic, and let 2026 be the year your business moves from manual labor to strategic orchestration.
