The 2026 Architect’s Blueprint: Mastering High-Performance AI Pipelines and IP Protection

By Abo-Elmakarem Shohoud | Ailigent

Introduction: The State of AI Engineering in 2026

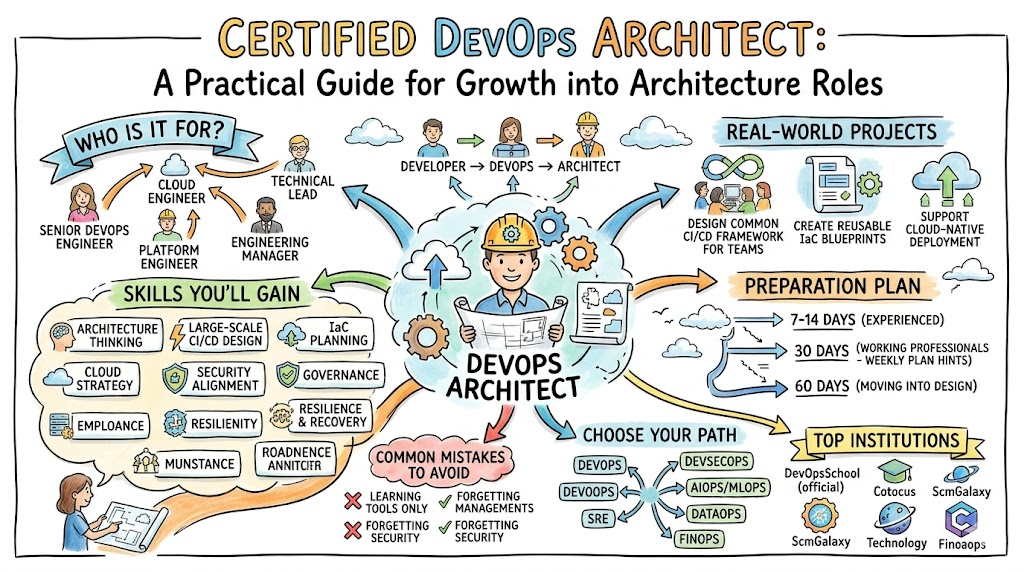

Certified DevOps Architect: A Practical Guide for Growth into Architecture Roles

Source: Dev.to AI

As we navigate the second quarter of 2026, the technological landscape has shifted from simple model experimentation to the industrial-scale deployment of Agentic AI. The "complexity gap" that defined the mid-2020s has widened, leaving many organizations struggling to bridge the divide between local prototypes and global, resilient infrastructure. Today, being a tech professional or business owner requires a threefold mastery: hardware-level optimization, architectural resilience, and strategic asset protection.

In this tutorial, we will explore how to build and scale AI systems that are not only performant—leveraging the raw power of NVIDIA H100s—but also architecturally sound and commercially protected. At Ailigent, we believe that the true value of AI lies in the intersection of efficient code and secure business logic.

Learning Objectives

By the end of this guide, you will be able to:

- Understand the fundamentals of CUDA programming for modern Hopper GPUs.

- Apply DevOps Architecture principles to eliminate silos in AI deployment.

- Implement a strategy to monetize AI "Secret Sauce" without exposing core IP.

- Navigate the 2026 landscape of distributed AI systems.

Phase 1: High-Performance Foundations with CUDA

To build competitive AI in 2026, you must understand the hardware. CUDA is a parallel computing platform and programming model created by NVIDIA that allows software to use NVIDIA GPUs for general-purpose processing.

With the ubiquity of NVIDIA H100s, optimizing for the Hopper architecture is no longer optional. The H100 introduces the Transformer Engine, specifically designed to accelerate AI workloads. To truly leverage this, engineers are moving toward WGMMA (Warpgroup Matrix Multiply-Accumulate) pipelines.

Practical Insight: Why WGMMA Matters

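Traditional matrix multiplication kernels often bottleneck on memory bandwidth rather than on compute. On Hopper, CUTLASS addresses this by pipelining data movement: new tiles are staged in shared memory while WGMMA instructions consume earlier ones, so the Tensor Cores spend less time waiting on data. Depending on the workload, this can reduce latency by up to 40% compared to generic kernels.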

Try it Yourself (Conceptual Exercise): Imagine you are optimizing a Large Language Model (LLM) inference engine. Instead of standard loops, visualize your data in 128x128 tiles. How would you schedule these tiles across the 132 Streaming Multiprocessors (SMs) of an H100 SXM (the PCIe variant has 114)? This mental mapping is the first step toward becoming a CUDA expert.
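One way to make this mental mapping concrete is the minimal Python sketch below. It assumes a hypothetical 4096x4096 output matrix, one 128x128 tile per thread block, and a plain round-robin assignment of tiles to SMs. Real schedulers, including those in CUTLASS, are more sophisticated, so the function names and problem size here are illustrative only.

```python
import math

TILE = 128      # tile edge length from the exercise
NUM_SMS = 132   # SM count from the exercise


def count_tiles(m: int, n: int, tile: int = TILE) -> int:
    """Number of tile x tile output tiles needed to cover an m x n matrix."""
    return math.ceil(m / tile) * math.ceil(n / tile)


def schedule_round_robin(num_tiles: int, num_sms: int = NUM_SMS) -> list:
    """Assign tile indices to SMs in round-robin order (illustrative only)."""
    assignment = [[] for _ in range(num_sms)]
    for tile_id in range(num_tiles):
        assignment[tile_id % num_sms].append(tile_id)
    return assignment


def wave_stats(num_tiles: int, num_sms: int = NUM_SMS) -> tuple:
    """Return (waves, utilization) when each SM runs one tile per wave."""
    waves = math.ceil(num_tiles / num_sms)
    utilization = num_tiles / (waves * num_sms)
    return waves, utilization


if __name__ == "__main__":
    tiles = count_tiles(4096, 4096)  # hypothetical problem size
    waves, utilization = wave_stats(tiles)
    print(f"{tiles} tiles, {waves} waves, {utilization:.1%} of SM slots used")
```

With these assumed numbers the tile count is not a multiple of 132, so the final wave leaves some SMs idle. This effect is often called wave quantization, and it is one reason tile shape and problem size are tuned together.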

Phase 2: Mastering the DevOps Architecture (CDA)

Scaling high-performance code requires a robust framework. DevOps Architecture is the design and implementation of automated software delivery pipelines that integrate development, operations, and security into a single, resilient lifecycle.

In 2026, the role of the Certified DevOps Architect (CDA) has evolved. It is no longer just about CI/CD; it is about managing the "cognitive load" of distributed systems. As Abo-Elmakarem Shohoud often emphasizes in Ailigent consultations, a system that cannot recover itself is a liability, not an asset.

The Post-Silo World

We have moved past the era where developers simply "throw code over the wall." A modern AI Architect must ensure that observability is baked into the infrastructure. This includes monitoring GPU temperatures, memory fragmentation, and model drift in real time.
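As a rough illustration of the model drift part of that list, the sketch below computes a Population Stability Index (PSI) between a baseline sample and a live sample of one numeric feature. PSI is only one of several common drift measures and is an assumption here, not something the original prescribes. The bin count, the `eps` floor, and any alert threshold you pair with it are likewise illustrative choices.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between two samples of a numeric feature.

    Bins are equal-width over the range of `expected`; values of `actual`
    that fall outside that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant features

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # Floor at eps so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]        # hypothetical training data
    live = [0.5 + i / 200 for i in range(100)]      # hypothetical shifted traffic
    print(f"PSI vs. itself:  {psi(baseline, baseline):.3f}")
    print(f"PSI vs. shifted: {psi(baseline, live):.3f}")
```

In a pipeline, a value like this would typically be exported as a metric next to GPU temperature and memory statistics, with an alert threshold chosen from your own historical data.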

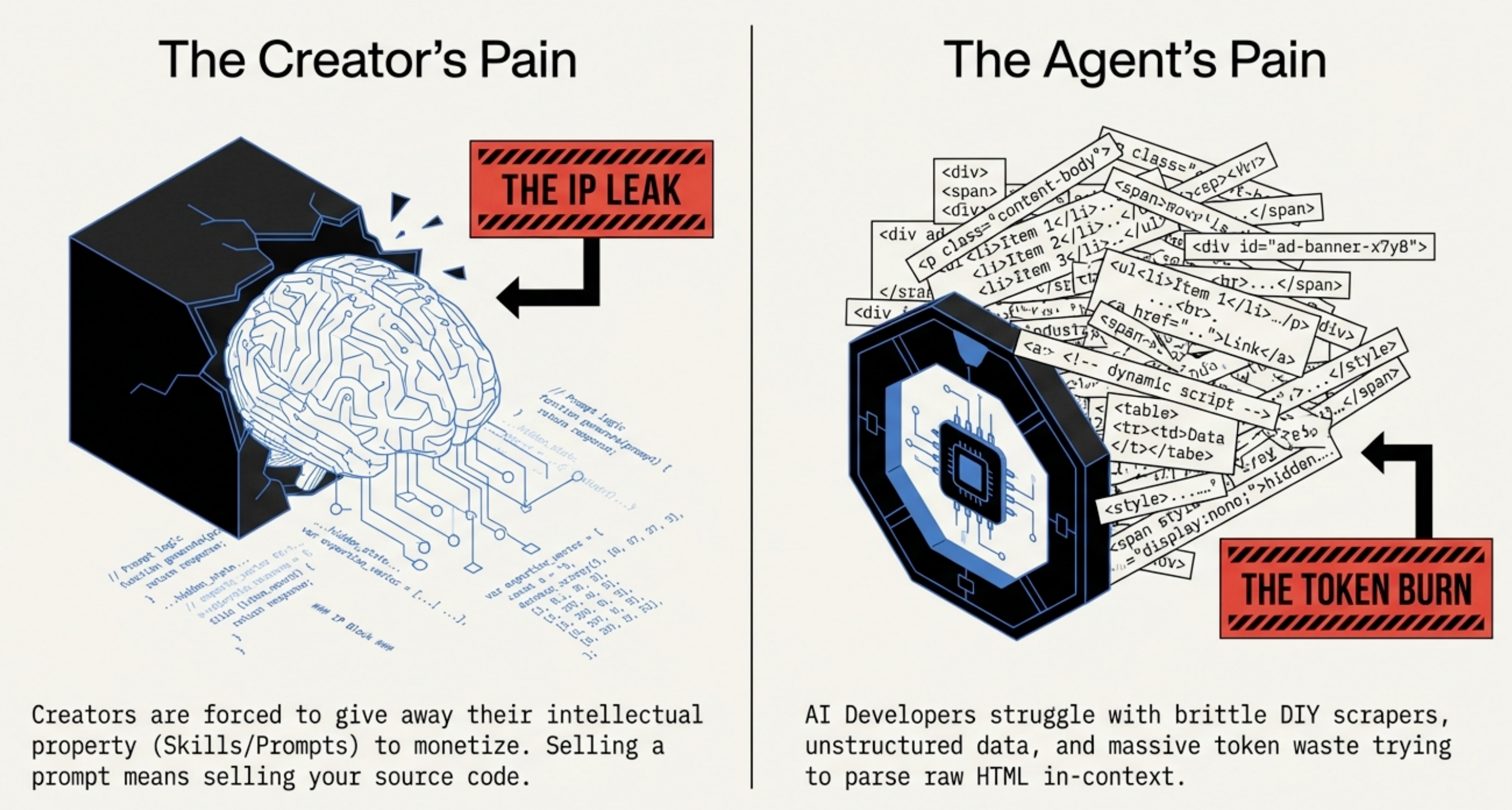

Stop Open-Sourcing Your Secret Sauce: The New Paradigm for AI Creators

Source: Dev.to AI

| Feature | Traditional DevOps (2022) | AI-Native DevOps (2026) |
|---|---|---|
| Primary Metric | Uptime / Latency | Token Throughput / Inference Cost |
| Scaling | Horizontal (CPU/RAM) | Heterogeneous (GPU/TPU Clusters) |
| Security | Firewall / IAM | Prompt Injection Shielding / IP Protection |
| Pipeline | Linear CI/CD | Feedback-Loop Data Flywheels |
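
The "Token Throughput / Inference Cost" metric in the table can be made concrete with a little arithmetic. The sketch below converts an hourly GPU price and a measured throughput into a cost per million tokens. The function name and the example figures are hypothetical and are not taken from any real price list or benchmark.

```python
def cost_per_million_tokens(gpu_hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Serving cost in USD per one million generated tokens on a single GPU."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return gpu_hourly_cost_usd / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs: a GPU rented at $4.00/hour sustaining 2,500 tokens/s
    print(f"${cost_per_million_tokens(4.00, 2500):.4f} per million tokens")
```

Tracking this number per deployment, rather than uptime alone, shows directly whether a kernel or batching optimization paid for itself.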

Phase 3: Protecting the "Secret Sauce"

One of the biggest challenges AI creators face in 2026 is the "IP Trap." If you open-source your most valuable algorithms to integrate them with AI Agents, you risk losing your competitive advantage. However, if you keep them locked away, you miss the booming Agentic AI economy.

Agentic AI is a paradigm where AI systems are designed to act as autonomous agents, capable of using tools, making decisions, and executing multi-step tasks to achieve a goal.

The New Paradigm: API-First Monetization

Instead of open-sourcing your raw Python scripts—whether they are crypto sentiment scorers or e-commerce price predictors—the 2026 standard is to wrap these insights into secure, high-performance microservices. This allows AI Agents to "rent" your intelligence without ever seeing the underlying code.

Step-by-Step Walkthrough: Securing an AI Logic Asset

- Containerize: Wrap your specialized algorithm (e.g., a custom CUDA-accelerated scorer) in a lightweight Docker container.

- Abstract: Create a robust API layer using FastAPI or Go.

- Authenticate: Use OIDC (OpenID Connect) to ensure only authorized AI Agents can call your service.

- Monitor: Implement rate limiting and logging to track usage and to detect and slow down high-volume probing, which is a common way to reverse-engineer a service's behavior.
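
The Authenticate and Monitor steps above can be sketched in plain Python, independent of any web framework. This is a minimal illustration under stated assumptions: authentication is stubbed as an allowlist of agent IDs (a real service would validate an OIDC token with a maintained library), rate limiting uses a simple token bucket, and `ScoringGateway`, `TokenBucket`, and `_proprietary_score` are hypothetical names standing in for your own service and algorithm.

```python
import time


class TokenBucket:
    """Allow bursts of up to `capacity` calls, refilled at `rate` tokens per second."""

    def __init__(self, capacity, rate, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def allow(self):
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def _proprietary_score(payload):
    """Placeholder for the protected algorithm; callers never see this code."""
    return (len(payload) % 100) / 100


class ScoringGateway:
    """Checks the caller, applies a per-agent rate limit, and logs every request."""

    def __init__(self, authorized_agents, capacity=5, rate=1.0, clock=time.monotonic):
        self.authorized_agents = set(authorized_agents)  # stand-in for OIDC validation
        self.capacity, self.rate, self.clock = capacity, rate, clock
        self.buckets = {}
        self.audit_log = []

    def handle(self, agent_id, payload):
        if agent_id not in self.authorized_agents:
            self.audit_log.append((agent_id, "denied"))
            return {"status": 401}
        if agent_id not in self.buckets:
            self.buckets[agent_id] = TokenBucket(self.capacity, self.rate, self.clock)
        if not self.buckets[agent_id].allow():
            self.audit_log.append((agent_id, "throttled"))
            return {"status": 429}
        self.audit_log.append((agent_id, "ok"))
        return {"status": 200, "score": _proprietary_score(payload)}


if __name__ == "__main__":
    gateway = ScoringGateway({"agent-a"}, capacity=2, rate=1.0)
    for _ in range(3):
        print(gateway.handle("agent-a", "BTC sentiment text"))
    print(gateway.handle("agent-x", "unauthorized call"))
```

The response exposes only a score, never the logic that produced it, and the audit log gives you the usage trail needed to spot an agent that is systematically sweeping the input space.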

Advanced Concept: Building Resilience in Distributed Systems

As we scale, we encounter the "complexity gap." A 2026 DevOps Architect uses Chaos Engineering to test AI pipelines. This involves intentionally injecting failures—such as killing a GPU node during a training run—to ensure the system self-heals without data loss.
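
The idea can be rehearsed in miniature before touching real hardware. The sketch below is an in-process simulation only: no GPU or node is actually killed, the "training" is a toy accumulation, and `NodeFailure`, `run_training`, `run_with_self_healing`, and the checkpoint interval are all hypothetical names and values chosen for illustration.

```python
CHECKPOINT_EVERY = 10  # hypothetical checkpoint interval, in steps


class NodeFailure(Exception):
    """Simulated loss of a GPU node during training."""


def run_training(total_steps, store, fail_at=None):
    """Toy training loop that resumes from `store` and checkpoints periodically."""
    step = store.get("step", 0)
    weights = store.get("weights", 0)
    while step < total_steps:
        if step == fail_at:
            # Injected fault: progress since the last checkpoint is lost
            raise NodeFailure(f"node lost at step {step}")
        step += 1
        weights += step  # stand-in for a real gradient update
        if step % CHECKPOINT_EVERY == 0 or step == total_steps:
            store["step"], store["weights"] = step, weights
    return weights


def run_with_self_healing(total_steps, fail_at):
    """Restart training from the last checkpoint whenever a node failure occurs."""
    store = {}
    restarts = 0
    while True:
        try:
            return run_training(total_steps, store, fail_at), restarts
        except NodeFailure:
            restarts += 1
            fail_at = None  # the injected fault fires only once


if __name__ == "__main__":
    clean = run_training(35, {})
    healed, restarts = run_with_self_healing(35, fail_at=27)
    print(f"clean={clean} healed={healed} restarts={restarts}")
    assert clean == healed, "recovery changed the training result"
```

The assertion at the end is the actual chaos experiment: the run that lost a node must converge to the same result as the undisturbed run, which is what "self-heals without data loss" means in practice.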

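Code Example: A Simple Health Check for AI Nodes (Python)

    import torch

    # 80 GB (decimal), the memory floor this check expects for an H100
    MIN_GPU_MEMORY_BYTES = 80_000_000_000


    def check_gpu_health():
        """Return a PASS/FAIL string for the GPUs visible on this node."""
        if not torch.cuda.is_available():
            return "FAIL: No GPU detected"

        # Check every visible device against the memory floor
        for i in range(torch.cuda.device_count()):
            props = torch.cuda.get_device_properties(i)
            if props.total_memory < MIN_GPU_MEMORY_BYTES:
                return f"FAIL: GPU {i} under-provisioned"

        return "PASS: Infrastructure Ready"


    # Integrate this into your CDA automated health-check pipeline
    if __name__ == "__main__":
        print(check_gpu_health())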

Summary of the 2026 Strategy

The integration of low-level optimization (CUDA), high-level structural integrity (DevOps), and strategic monetization (IP Protection) forms the backbone of a successful AI enterprise. By focusing on these three pillars, you transition from a simple developer to an Architect of the future.

Key Takeaways

- Optimize for Hardware: Use WGMMA and CUTLASS to unlock the full potential of NVIDIA H100 GPUs; generic code is too expensive in 2026.

- Bridge the Complexity Gap: Adopt a DevOps Architect mindset to manage the cognitive load of distributed AI systems and target high availability (for example, 99.99% uptime).

- Protect Your IP: Never open-source your "secret sauce" algorithms; instead, monetize them through secure API layers for the Agentic AI ecosystem.

- Continuous Learning: The transition from engineering to architecture requires understanding both the "how" (code) and the "why" (business value).

Next Steps for Further Learning

- Deep Dive into CUTLASS: Explore NVIDIA's GitHub repository for the latest 2026 optimizations for Hopper and Blackwell architectures.

- Certification: Consider pursuing a Certified DevOps Architect (CDA) track to formalize your architectural skills.

- Ailigent Consultations: Reach out to Abo-Elmakarem Shohoud for personalized strategies on automating your AI infrastructure and protecting your digital assets.

Bottom Line: In 2026, the winners aren't just those with the best models, but those with the most resilient and well-protected execution pipelines.
