The Hidden Price of AI Connectivity: Navigating MCP 'Token Bloat' in 2026

The Hidden Price of AI Connectivity: Navigating MCP 'Token Bloat' in 2026

It is early 2026, and the dream of fully autonomous AI agents is no longer a futuristic concept—it is a daily business reality. We’ve spent the last year integrating everything: our GitHub repositories, Notion databases, Slack channels, and AWS infrastructures into our Large Language Models (LLMs) using the Model Context Protocol (MCP).

Illustration

Source: Dev.to AI

Illustration

Source: Dev.to AI

However, as we stand here in January 2026, many CTOs and automation engineers are hitting a wall they didn't anticipate. We called it the "Universal Connector" era, but it’s quickly turning into the "Token Bloat" era.

The "Token Tax" You Didn’t Know You Were Paying

When we first started using MCP servers, the promise was simple: give your AI tools, and it will give you results. But recent data from the developer community has revealed a staggering hidden cost.

Take the standard GitHub MCP server, for example. To simply "know" how to interact with GitHub—understanding its 93 different tool definitions—the LLM (like Claude 3.5 or its 2026 successors) must consume approximately 55,000 tokens.

Let that sink in. Before you have typed a single prompt, before the AI has analyzed a line of your code, you have already burned 55,000 tokens of your context window. If you stack a few more servers—say, Notion for documentation and Jira for project management—you are looking at over 100,000 tokens of "overhead" just to establish the connection.

Why This Matters for Your Business in 2026

For a business owner, this isn't just a technical quirk; it’s a productivity and financial drain:

- Context Dilution: LLMs have finite attention. When 50% of the context window is filled with "how to use GitHub," the AI becomes more prone to hallucinations and misses the specific nuances of your actual business problem.

- Increased Latency: More tokens mean longer processing times. In 2026, speed is a competitive advantage. Waiting 30 seconds for an agent to "think" through its tool definitions is unacceptable.

- Surging Costs: Even as token prices have dropped since 2024, the sheer volume of overhead tokens in agentic workflows is bloating API bills.

Illustration

Source: Dev.to AI

Illustration

Source: Dev.to AI

The Shift to Prompt Orchestration (SLOP)

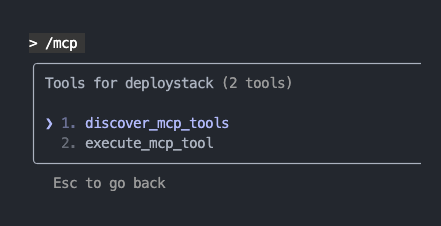

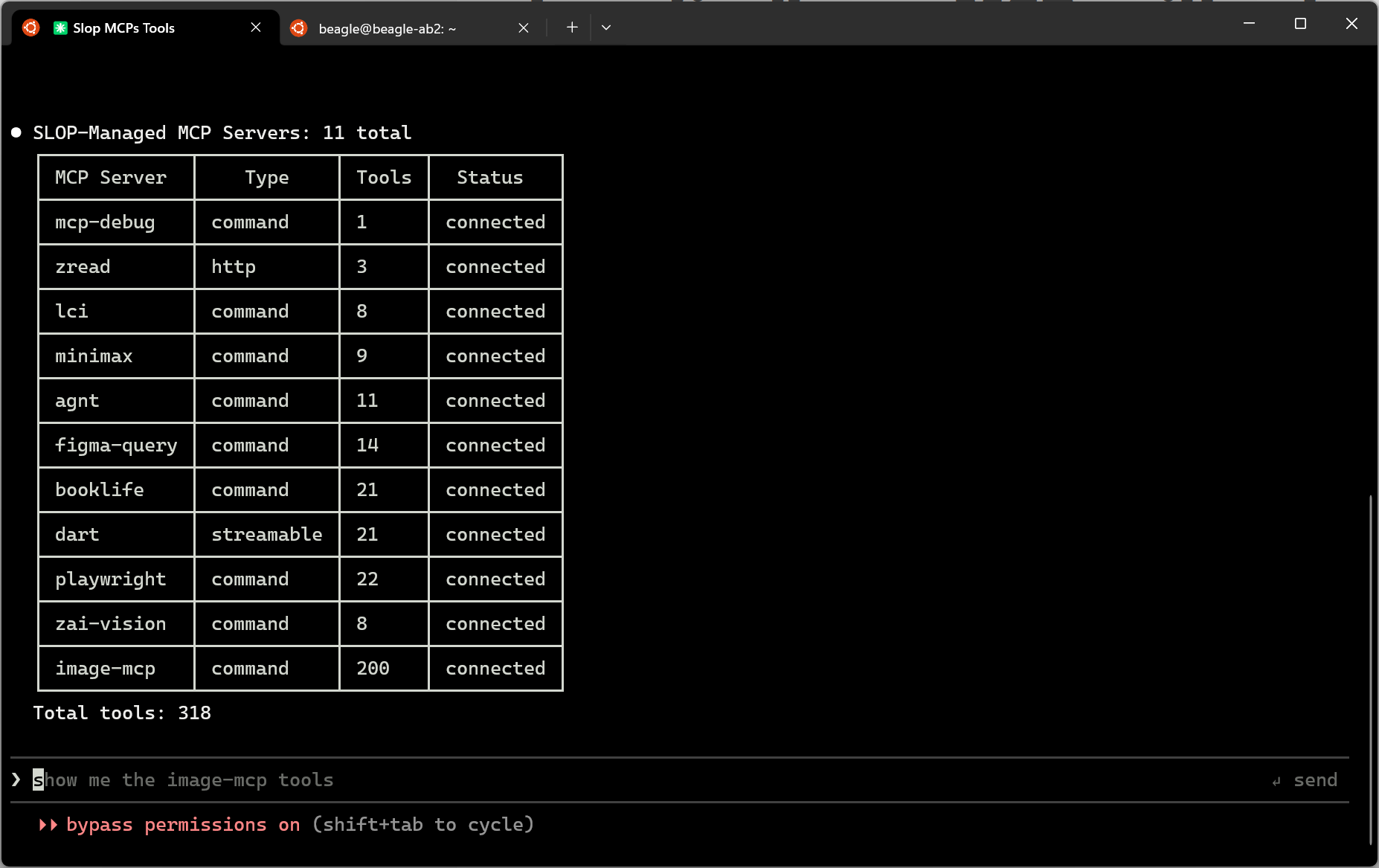

We are seeing a pivot in the industry. Developers are moving away from "raw" MCP connections toward more sophisticated orchestration. A recent innovation that has gained traction is the Simple Language for Orchestrating Prompts (SLOP).

Instead of overwhelming an LLM with every possible tool definition, SLOP acts as a specialized layer. It allows developers to write custom, lightweight instructions that tell the AI exactly which tool to use and when, without needing the full "instruction manual" for every server in every turn of the conversation.

This is the future of efficient automation: Selective Intelligence. Instead of a general-purpose agent that knows everything but masters nothing, we are building focused agents that load only the tools necessary for the specific task at hand.

Staying Ahead: The AWS Ecosystem and Beyond

As we navigate these technical challenges, community involvement remains the best way to stay informed. For those of us deep in the cloud ecosystem, the AWS Community Builder 2026 applications have just opened. This program is a prime example of where these conversations are happening—where the top 1% of experts are figuring out how to balance the massive scale of AWS services with the efficiency requirements of modern AI.

If you are an automation professional, joining such communities is no longer optional. It is where the solutions to "Token Bloat" and "Agentic Latency" are being built in real-time.

Actionable Takeaways for 2026

How should your business handle AI automation right now? Here is a roadmap:

- Audit Your MCP Servers: Don’t just connect everything. If your agent is writing code, it probably doesn't need its Slack or Spotify MCP servers active in the same session. Use "Context-Aware Loading."

- Implement an Orchestration Layer: Look into languages like SLOP or custom TypeScript wrappers to filter tool definitions before they reach the LLM.

- Monitor 'Pre-Prompt' Token Usage: Use observability tools to track how many tokens are being spent on tool definitions versus actual logic. If your overhead exceeds 30% of your average prompt, it’s time to optimize.

- Invest in Specialized Small Models: For simple tool-calling tasks, consider using smaller, faster models that are fine-tuned for specific MCPs, rather than a massive frontier model for everything.

Conclusion

The goal of 2026 isn't to have the most connected AI; it’s to have the smartest connected AI. By understanding the hidden costs of MCP tool overload and moving toward intelligent orchestration, we can ensure our automation remains fast, accurate, and—most importantly—profitable.

Are you feeling the slowdown in your AI agents? Let’s talk about optimizing your automation architecture for the 2026 landscape.